Erick Buenrostro

Digital Resource & Content Specialist

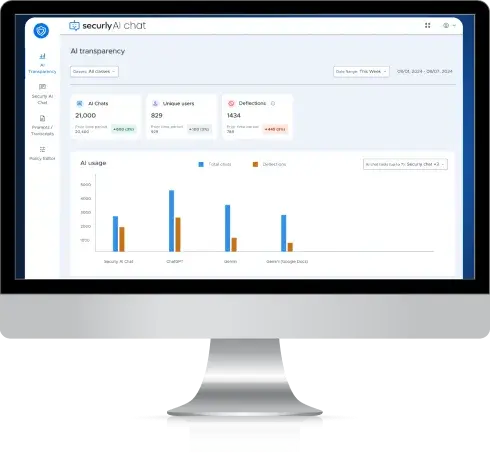

When AI tools swept through classrooms, Ysleta ISD refused to look away or simply block access. Partnering with Securly, the district gained real-time visibility into student AI conversations across platforms and cut weekly deflections from 46,000 to under 6,000. A deflection is an instance where the AI steers a conversation away from a policy-violating topic or, if the student persists, refuses to engage further. The district also built a data-driven framework that redirects students to safe, approved AI tools instead of shutting down innovation. Today, nearly 25,000 productive student AI chats happen each week, and the district can see every one of them.

Weekly deflections reduced from 46,000 to under 6,000 after implementing Securly's AI guardrails and redirect

90% drop in AI deflections after the first week of turning on Securly's AI Transparency Solution

In Securly's AI Transparency Solution, a deflection is an instance where the AI steered a student's conversation away from a policy-violating topic or, if the student persisted, refused to engage with the topic further.

A high volume of AI usage paired with a low deflection rate is a strong indicator of success: it means students are overwhelmingly using the technology to learn, create, and innovate, rather than to test boundaries.

Nearly 25,000 student AI conversations per week

Comprehensive AI guidance framework developed over 1+ year

A 34,000-student border district knew students were using AI but couldn't see how

As AI tools exploded in popularity, the technology team at Ysleta ISD (YISD), led by Digital Resource & Content Specialist Erick Buenrostro, knew their students were already on ChatGPT, Gemini, Copilot, and other platforms. For a district deeply committed to responsible technology use, the question was never if students were using AI, but how. YISD needed data to answer that question responsibly.

“It is not surprising to see such a vast adoption of artificial intelligence tools, very similar to that of social media. When social media came around, it exploded. AI was no different, especially because it was fueled during a time where people were isolated for a large majority of their time.” — Erick Buenrostro

Buenrostro and his team had spent years researching AI, participating in webinars, reading white papers, and studying the privacy and security landscape well beyond the buzzwords. But research alone wasn't enough. They needed actual visibility into what was happening on student devices.

“It's one thing to say, yes, we can see it on our logs, but it's another thing entirely to be able to say, let's go look at the conversation. Let's look at what that looks like over time, because that matters.”

The district could see that students were accessing AI tools, but without the ability to view actual conversations, understand usage patterns over time, or distinguish productive learning from misuse, it was flying blind. Simply blocking AI wasn't an option for a district committed to preparing students for a workforce already being transformed by the technology.

“World Economic Forum suggests that over eighty percent of jobs have already changed to embed AI. Corporate has changed. Education is doing the same. So we cannot afford to look away and be afraid of technology, for the sake of our kids' futures.”

Securly's AI Transparency Solution gave YISD the visibility and guardrails to embrace AI safely

When Securly launched its AI Transparency Solution, Buenrostro immediately recognized what set it apart: no other tool on the market provided actual visibility into student AI chat conversations across multiple platforms.

“It's a very unique tool because not everybody has it. I think it was the only tool at the time that had access to actual chat conversations.”

Turning it on was instant. Through Securly Filter's policy editor, Buenrostro enabled the AI Transparency Solution the same day he saw the demo, with no complex deployment and no disruption to the school day.

“We went to a webinar and said, hey, you have this feature. It's as simple as flipping a switch. Tell us where you want it on and start collecting data, and that's what we did that very same day. And within a week, we already had a lot of historical data that we could access to start seeing what our students were using it for.”

Beyond simple setup, Securly gave the district the ability to customize guardrails by grade level, choose which AI tools to allow, and set up deflection responses aligned with YISD's own policies, all within the platform they were already using for web filtering.

Rather than blocking AI outright, YISD took a deliberate, data-informed approach built on three pillars:

The district used Securly's AI Transparency Solution to collect two full months of usage data before making any policy changes. They studied how students were actually engaging with AI tools, whether use was transactional, educational, or misuse, before deciding what to do about it.

“Our district operates exclusively on data, and the decisions we take are based off of the data that we see. We're very proud of our approach with that.”

Using Securly Filter's AI guardrails, YISD redirects students to approved, safe AI tools rather than cutting off access entirely. Students now have two approved options: Securly AI Chat (available to all students, and the primary tool for elementary) and Gemini (for middle and high school students). When a student tries to access an unapproved AI tool, Securly Filter redirects them to these approved options.
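The redirect behavior described above can be pictured as a simple lookup by grade band. The sketch below is purely illustrative and is not how Securly Filter is configured: the names `APPROVED_TOOLS` and `route_request` are hypothetical, and it assumes that elementary students are limited to Securly AI Chat while middle and high school students may use either approved tool.

```python
# Hypothetical illustration only: these names are not Securly settings or APIs.
# Assumption: elementary students have Securly AI Chat only; middle and high
# school students have Securly AI Chat and Gemini.
APPROVED_TOOLS = {
    "elementary": ["Securly AI Chat"],
    "middle": ["Securly AI Chat", "Gemini"],
    "high": ["Securly AI Chat", "Gemini"],
}


def route_request(grade_band: str, requested_tool: str) -> tuple[str, list[str]]:
    """Allow an approved tool, otherwise redirect to the approved options."""
    approved = APPROVED_TOOLS[grade_band]
    if requested_tool in approved:
        return ("allow", [requested_tool])
    # Unapproved tools are not simply blocked; the student is pointed
    # to the tools approved for their grade band.
    return ("redirect", approved)


print(route_request("high", "ChatGPT"))
print(route_request("middle", "Gemini"))
```

The point of the design is that an unapproved request always returns somewhere for the student to go, rather than a dead end.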

“We are not blocking the use of artificial intelligence. We don't want to block innovation, but we are definitely going to redirect our kids to what is safest.”

“A lot of filters will block, but they don't provide the redirect. And that, I think, is a very important feature.”

YISD uses Securly's policy controls to set different levels of AI access based on grade band. Before approving any tool, YISD's team logged into student accounts and tested every scenario firsthand, seeing exactly what students would see, including how Securly's guardrails and content deflections would respond.

“We thought of scenarios that we would see kids typing into these chatbots, and we went and tried them ourselves from the perspective of a student account that is under the same umbrella of filters and safety and security. So we understood exactly what the student was gonna see, what our educator was gonna see when the student tried that in the classroom, before we said, yes, you can use this in the classroom.”

Deflections plummeted, positive AI usage climbed, and YISD has the complete picture to prove it

Immediate, measurable impact

After enabling Securly's AI Transparency Solution and implementing guardrails through Securly Filter, Buenrostro tracked the data week over week and shared it directly with district leadership:

“I sent them the information of the week prior, five days, Monday through Friday. This is where we sit prior to having implemented anything. Then I showed them the very first week we implemented. It had already gone down by 90%. Then I sent them the next week, even further. And the week after that, even further.”

Before Securly implementation: 46,000 deflections per week

Current state: Under 6,000 deflections per week, a reduction of approximately 87%
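The roughly 87% figure follows from the weekly counts cited in this case study (46,000 deflections before, under 6,000 now). A minimal sketch of the arithmetic, where the function name `percent_reduction` is only illustrative and 6,000 is treated as an upper bound:

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Weekly deflection counts quoted in the case study.
# "Under 6,000" is treated as an upper bound, so the result is a lower bound.
weekly_before = 46_000
weekly_after_upper_bound = 6_000

reduction = percent_reduction(weekly_before, weekly_after_upper_bound)
print(f"Reduction of at least {reduction:.0f}%")  # Reduction of at least 87%
```

Because the current count is under 6,000, the actual reduction is slightly higher than the value computed here.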

What's even more telling is what happened next. After the initial drop, some students disengaged from AI altogether, assuming it had been blocked. But as YISD continued educating students and teachers about productive AI use, chat volume climbed back up while deflections stayed low.

“Our conversations significantly dropped off, because as students figured out, well, I can't do certain things anymore, they get bored of it. But then we started seeing our conversations go back up, but our deflections stayed low. Because then our students started figuring out, well, hey, I can still use it for an intended true purpose of AI. I can still use it for education, for ideas, for creativity, just in appropriate safe ways.”

One of the most powerful aspects of Securly's AI Transparency Solution, according to Buenrostro, is the ability to see a student's full digital picture. Rather than viewing a single concerning prompt in isolation, educators can access the entire context across AI platforms, web activity, and other digital tools.

“Things like Securly's insights, where you're able to see the history, not just for Securly AI Chat, but also for the other major chatbots and LLMs, is huge because you're not leaving gaps in what is the full picture of a student and how they're using it.”

This cross-platform visibility also protects students from being falsely accused. When educators can see exactly what AI tools a student did or didn't use, it creates accountability that works in both directions.

“We wanna avoid situations where a student might be mistakenly accused of using artificial intelligence when it was truly their creativity. So tools like Securly's insights give us balance.”

YISD's approach has been anchored in student safety from the beginning, a priority set at the highest level of district leadership and operationalized through Securly's platform. The district uses Securly's existing chain of command and alert systems to handle safety-related deflections. When AI conversations are flagged for self-harm, violence, or cyberbullying, trained personnel (not classroom teachers) are notified and follow established intervention protocols.

“From day one, our superintendent was very clear that if we're gonna move forward with artificial intelligence in our schools, we're doing it with safety as the number one priority. We have an incredible group of people, a chain of command that exists for the forty-six schools that belong to the district as to how those deflections are handled, on a case-by-case basis. Whether it's a cry for help, whether it's something repetitive that now requires a follow-up, it takes a village and the right tools.”

YISD's framework took over a year to build, but every district has to start somewhere. Buenrostro's advice is clear: get visibility first, then let the data guide your decisions.

“It comes down to data. You lose nothing by looking at that data. The world is moving in that direction. AI is here to stay.”

Blocking AI at the district level doesn't make the problem go away. Students will find it on personal devices, through social media, and in the EdTech tools already embedded in your classrooms.

“If I block it on a district level, they're gonna pull out a cell phone when they get home and use it. It's not gonna stop. It's embedded in social media, in web searches, it's embedded everywhere they look. We just can't pretend to not see it.”

Buenrostro also flags a blind spot many districts overlook: existing EdTech tools are already embedding AI, and current data-sharing agreements may not cover AI-specific data practices.

“Everybody's using the word AI like nobody's business. What are they doing with your data? Even if you have a data sharing agreement, it protects one part of your platform, but it doesn't necessarily imply that it's protecting your AI data.”

The first step is gaining visibility into what's already happening on your student devices. The second is building the policy framework to support it. Securly can help with both.

Get Securly Filter with AI Transparency tools set up in your district.

Download a starting guide to help your district begin building its own AI use framework.

“Districts that choose to block and not really invest the time and energy are doing a disservice to students.”

Buenrostro's message to cautious superintendents is direct: start with research, use data to drive decisions, and partner with a safety-first platform like Securly to gain the visibility you need.

“These are the people that lead the future. And so we need to educate our people into using it at the appropriate times, knowing when that limit exists. Do your research, invest, have these conversations with companies, because it's now or never.”